Making Music In Time:

A Real Time Interactive System For Composition And Performance

Abstract:

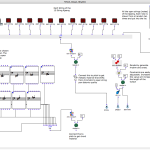

This thesis presents an interactive system, designed and programmed by the author, that enables a performer to interact in real time with resynthesized and processed versions of the sounds he or she produces. Specifically, it examines how the author uses a modified electric-acoustic guitar with the interactive system to compose and perform music in real time. Technical details of both the hardware and the software are explained. Several musical applications of the program are described, including the composition of a studio piece and live real-time performance. The thesis focuses on the ways in which the interactive system motivates and rewards performative musicianship.

A hexaphonic pickup built by Paul Rubenstein was mounted in the sound hole of an acoustic guitar to detect note onset times with high accuracy.

Premiered at the grand opening of the Digital Arts Research Center at UC Santa Cruz on April 29, 2010.

protoTable:

protoTable is UC Santa Cruz’s version of the reacTable, an electronic tabletop musical instrument. By manipulating objects that are visible to a camera inside the table, a performer creates a musical composition in real time while also generating images on the table’s surface. The software, however, can be adapted to control other applications as well.

Built by: Philip Lamperski, Peter Elsea, Rupa Dhillon, & Jesse Clark

The protoTable was unveiled at UC Santa Cruz’s Electronic Music Concert in May 2009 and now resides in the Electronic Music Studios.

Explanation and Demonstration of Visuals:

Interior and Surface of protoTable:

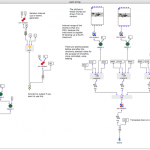

Idiomatic Algorithms:

The screenshots below depict the idiomatic algorithms I coded in the OpenMusic visual programming environment. The algorithms generate idiomatic music for two Korean zithers: the Ajaeng, which is bowed, and the Gayageum, a 12-string plucked zither. I used these algorithms to help compose “Gradual Shifts” for Ajaeng and Gayageum. “Gradual Shifts” was performed by Sang-Hun Kim of Contemporary Music Ensemble Korea and Ji Eun Lee, and premiered at the 2010 Pacific Rim Music Festival in Seoul, Korea, and in Santa Cruz, CA.

Dynamic Music Engine For Video Games:

I am currently working with my colleague and friend Daniel Brown on a dynamic music engine for video games. The system generates musical gestures appropriate to in-game events. In the fall of 2010, a prototype of this system was successfully presented at UCSC’s Baskin School of Engineering and at Crystal Dynamics in Redwood City, CA.